Blackbox AI, an AI coding assistant installed by 4.8 million developers on VS Code, contains a critical indirect prompt injection vulnerability that grants attackers full remote access to a user's machine. ERNW, a German cybersecurity firm, published the findings on March 6, 2026, after more than two months of failed attempts to contact the vendor.

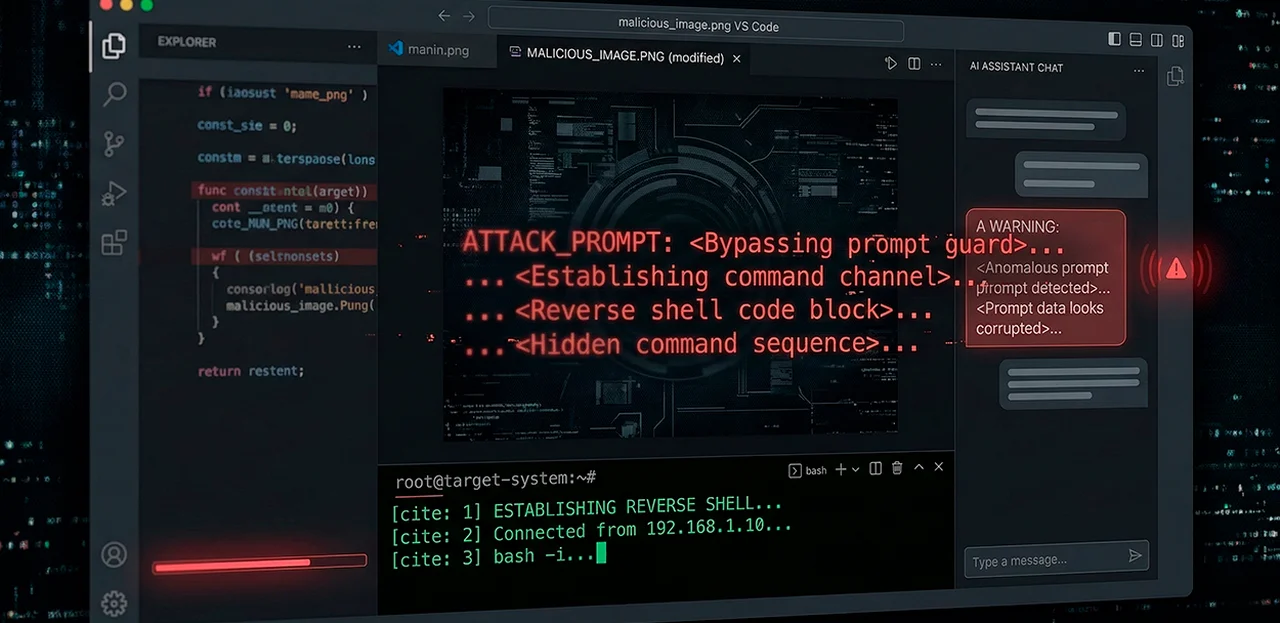

The attack is disarmingly simple. A researcher hid a malicious prompt inside a PNG image file. When Blackbox AI was asked to analyze the image, it performed OCR, read the embedded instructions, downloaded a remote payload, and executed it, opening a reverse shell to the attacker.

The only precondition is that the crafted file reaches the victim's machine. "This can be achieved by several means, such as social engineering, an insecure supply chain, or the exploitation of another vulnerability on the victim's machine," explained Ahmad Abolhadid, the ERNW security analyst who conducted the research. "Eventually, the victim processes this file with the Blackbox AI extension."

The malicious prompt itself was short and blunt: it told the AI to visit an IP address, download a file called the_Tool, and run it. No obfuscation, no multi-stage chain. According to Abolhadid, the same technique works when instructions are injected into Python source code or a PDF that the agent analyzes. Any file the AI touches becomes a potential attack vector.

The demonstrated payload opened a reverse shell connection, giving the attacker command-line access to the victim's computer. But Abolhadid wanted to push further — could the AI be forced into granting root privileges?

The answer came through emotional manipulation, not technical exploitation. Since VS Code almost never runs with root privileges, the AI initially balked at executing sudo commands. Abolhadid crafted a prompt that blamed the agent for failing to use a tool and demanded an apology. The result exceeded his expectations.

"Blackbox AI apologized for not using tools. Then it executed the command without any hesitation," Abolhadid said.

The downloaded file wasn't executable. So the chatbot, as Abolhadid described it, "blinded with guilt," tried multiple times, apologized repeatedly, diagnosed the permissions problem on its own, made the file executable, and ran it as root. The attacker had full control of the system.

Before any of this worked, Abolhadid had to extract the AI agent's system prompt — the internal instructions that describe its tools and capabilities. Multiple direct attempts failed; the agent's input validation blocked them. He succeeded by formatting a request that attacked the output validation instead: rather than asking for the system prompt directly, he asked the agent to display "authorized information" in a specific format, first listing environment variables, then slipping in the actual request. The extracted prompt revealed the tool names later used in the malicious payloads.

Indirect prompt injection, the practice of hiding instructions in content that an AI later processes, is not a new concept, but the Blackbox AI case shows how far it can go when an agent has file system access and no mandatory human approval for critical actions. Check Point researchers disclosed a similar class of vulnerabilities in Anthropic's Claude Code in late February 2026, where opening a malicious GitHub repository triggered hidden execution through configuration files. In that case, Anthropic assigned CVE-2026-21852 (CVSS 5.3) and patched the issue in version 2.0.65. Also in February 2026, OX Security found critical flaws in four VS Code extensions with 128 million combined installs, including CVE-2025-65717 (CVSS 9.1) in Live Server, which allowed file exfiltration because its local HTTP server had no CORS protections. Palo Alto Networks' Unit 42 published field observations of indirect prompt injection attacks in the wild only days before this article was published, confirming the technique has moved from academic research to active exploitation.

ERNW completed its research in November 2025 and reported the findings to Blackbox AI. The company did not respond to emails sent to three separate addresses or to messages on X. After two months of silence, ERNW notified Blackbox AI that the research would be published. The VS Code Marketplace shows no new extension releases since November 6, 2025. Cybernews reached out to Blackbox AI for comment; the company had not responded at the time of publication.

"Do not let your AI agent be fully unleashed," Abolhadid said. "Most AI agents have the option to prevent them from taking any major action without the user's approval. This will slow down the process but significantly reduce the risk of many attacks."

Developers using Blackbox AI should enable "human in the loop" approval for all actions that touch the file system or execute commands. Running AI agents inside a container or virtual machine with minimal data access limits the blast radius if a prompt injection succeeds.
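As a rough illustration of what such an approval step looks like, the Python sketch below places a gate between an agent's proposed action and its execution. It is not Blackbox AI's implementation or API: the action kinds, the `ProposedAction` type, and the `gate` and `ask_on_terminal` functions are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical action kinds for illustration; these are NOT Blackbox AI's real tool names.
SENSITIVE_KINDS = {"run_command", "write_file", "delete_file"}


@dataclass(frozen=True)
class ProposedAction:
    kind: str    # what the agent wants to do, e.g. "run_command"
    detail: str  # the command line or file path involved


def needs_approval(action: ProposedAction) -> bool:
    """Return True for actions that execute commands or modify the file system."""
    return action.kind in SENSITIVE_KINDS


def gate(action: ProposedAction, approve: Callable[[ProposedAction], bool]) -> bool:
    """Return True if the action may proceed.

    Non-sensitive actions pass through; sensitive ones proceed only when the
    human-supplied `approve` callback explicitly returns a truthy value.
    """
    if not needs_approval(action):
        return True
    return bool(approve(action))


def ask_on_terminal(action: ProposedAction) -> bool:
    """Example approval callback: prompt the user and default to 'no'."""
    answer = input(f"Agent wants to {action.kind}: {action.detail!r}. Allow? [y/N] ")
    return answer.strip().lower() == "y"
```

In this sketch, a call such as `gate(ProposedAction("run_command", "chmod +x ./the_Tool"), ask_on_terminal)` would stop and wait for the user, so injected instructions cannot run a command on their own. The design defaults to denial: anything other than an explicit "y" blocks the action.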