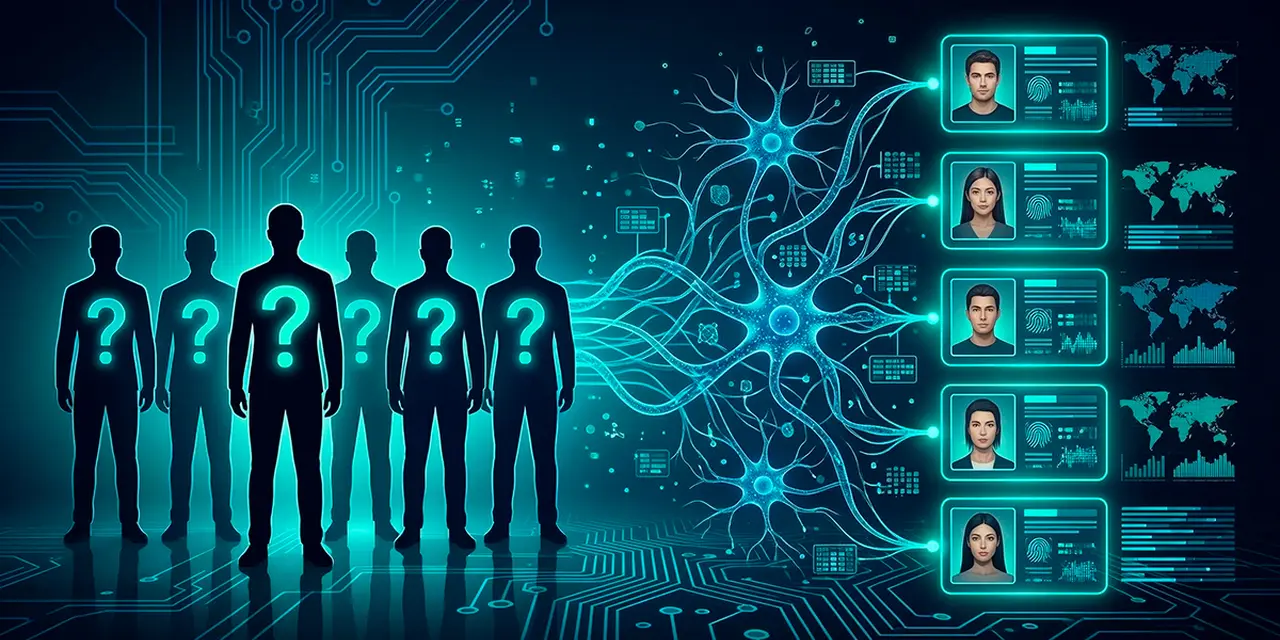

An automated LLM pipeline matched 67% of Hacker News users to their real LinkedIn profiles. Researchers from ETH Zurich and Anthropic ran the full experiment for less than $2,000, paying between one and four dollars per identification. A task that once required hours of manual investigation now finishes in minutes.

Six researchers published the paper on February 18, 2026. The team includes Simon Lermen (MATS Research), Daniel Paleka (ETH Zurich), Joshua Swanson, Michael Aerni, Nicholas Carlini (Anthropic), and Florian Tramèr (ETH Zurich). The paper, titled "Large-scale online deanonymization with LLMs" (arXiv:2602.16800), has not yet been peer-reviewed.

The attack pipeline has four stages, which the authors call ESRC (Extract, Search, Reason, Calibrate). First, an LLM reads a user's post history and extracts identity-relevant features from the raw, unstructured text. Next, a semantic embedding search finds candidate matches in a target database. The LLM then reasons over the top candidates, weighing evidence for and against each match. Finally, a calibration step assigns confidence scores so the system can suppress false positives.
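The researchers did not release their code, so the following is not their implementation. It is a toy sketch of the four-stage shape only, under loud assumptions: crude keyword counting stands in for the LLM in the Extract stage, word-count cosine similarity stands in for a semantic embedding model, and a simple score margin stands in for LLM reasoning. The function names, the sample profiles, and the 0.1 threshold are all hypothetical.

```python
import math
import re
from collections import Counter
from typing import Dict, List, Optional, Tuple

# Extract (stand-in, not an LLM): keep lowercase words of 4+ letters and count them.
def extract_features(text: str) -> Counter:
    return Counter(re.findall(r"[a-z]{4,}", text.lower()))

# Cosine similarity over word counts, standing in for semantic embeddings.
def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

# Search: rank every candidate profile by similarity and keep the top k.
def search(query: Counter, candidates: Dict[str, Counter], k: int = 3) -> List[Tuple[str, float]]:
    scored = [(cid, cosine(query, feats)) for cid, feats in candidates.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]

# Reason (stand-in): score the leader by its margin over the runner-up,
# a rough proxy for weighing evidence for and against each candidate.
def reason(top: List[Tuple[str, float]]) -> Tuple[Optional[str], float]:
    if not top:
        return None, 0.0
    best_id, best_score = top[0]
    runner_up = top[1][1] if len(top) > 1 else 0.0
    return best_id, best_score - runner_up

# Calibrate: abstain unless the margin clears a threshold.
# Raising the threshold trades recall for precision.
def calibrate(best_id: Optional[str], margin: float, threshold: float) -> Optional[str]:
    return best_id if margin >= threshold else None

# Hypothetical end-to-end helper chaining the four stages.
def link_account(posts: str, profiles: Dict[str, str], threshold: float = 0.1) -> Optional[str]:
    query = extract_features(posts)
    candidates = {pid: extract_features(bio) for pid, bio in profiles.items()}
    best_id, margin = reason(search(query, candidates))
    return calibrate(best_id, margin, threshold)

# Invented example data.
profiles = {
    "alice": "Rust compiler engineer in Zurich, works on borrow checker tooling",
    "bob": "Marketing lead in Austin, loves barbecue and cycling",
}
posts = "I spend my days fighting the borrow checker; Zurich winters are long."
print(link_account(posts, profiles))  # prints alice
```

The abstention in `calibrate` is the design point worth noticing: a linker that is allowed to say "no match" can keep its precision high even when many users remain unidentified.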

Previous deanonymization techniques required structured data. The landmark 2008 Netflix Prize attack relied on ratings, timestamps, and numerical fields. The new pipeline works directly on forum posts, comments, interview transcripts, and bios.

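The Hacker News-to-LinkedIn test used a pool of roughly 89,000 candidates. Even after the researchers stripped all direct identifiers (names, URLs, social handles), the pipeline achieved 68% recall at 90% precision: tuned so that nine in ten of its identifications were correct, it still identified 68% of users. The best non-LLM method scored near 0%.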
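"Recall at 90% precision" is computed by sweeping a confidence threshold over the system's scored guesses and keeping the best recall among thresholds where precision stays at or above 90%. The sketch below is a minimal illustration of that metric with invented data; the function name is hypothetical and the paper's exact evaluation protocol may differ (for example in how ties or abstentions are handled). It also shows why a weak baseline can score near 0%: if its most confident guesses are often wrong, precision never reaches 90% except at trivial recall.

```python
from typing import List, Tuple

def recall_at_precision(
    preds: List[Tuple[float, bool]], n_targets: int, min_precision: float = 0.9
) -> float:
    """Best recall reachable at any confidence threshold with precision >= min_precision.

    preds:     one (confidence, is_correct) pair per identification the system made.
    n_targets: total users who have a true match in the candidate pool,
               including users the system never attempted.
    Tied confidence scores are not grouped, which is a simplification.
    """
    best = 0.0
    correct = 0
    ranked = sorted(preds, key=lambda p: p[0], reverse=True)
    # Each prefix of the ranked list corresponds to one confidence threshold.
    for answered, (_, is_correct) in enumerate(ranked, start=1):
        correct += int(is_correct)
        if correct / answered >= min_precision:
            best = max(best, correct / n_targets)
    return best

# Invented example: six guesses about five users who truly have a match.
preds = [(0.99, True), (0.95, True), (0.90, True), (0.80, False), (0.70, True), (0.60, False)]
print(recall_at_precision(preds, n_targets=5))                      # prints 0.6
print(recall_at_precision(preds, n_targets=5, min_precision=0.75))  # prints 0.8
```

Loosening the precision requirement from 90% to 75% lets the threshold drop past one wrong guess, which raises recall from 0.6 to 0.8 in this toy data.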

If your operational security requires that no one ever spend hours or days investigating who you are, this security model is now broken.

— Daniel Paleka, doctoral student at ETH Zurich and co-author

Reddit users also proved vulnerable. One experiment matched users across different film communities based solely on movie reviews, and switching the LLM from low to high reasoning effort roughly doubled correct matches at the strictest precision threshold, according to GovInfoSecurity's interview with Paleka.

Another test split a single Reddit user's posting history in half. Classical baselines could not reconnect the two halves. The LLM pipeline could.

The researchers ran a separate open-world test on 33 anonymized scientist interview transcripts from Anthropic's public dataset. Given only the transcript and web access, the LLM agent correctly identified nine of the 33 scientists at 82% precision. It picked up on clues such as research subfield, the specific software libraries mentioned, and British English usage.
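The two numbers in that result measure different things. Assuming precision is computed over the cases where the agent committed to an answer and is rounded to the nearest percent, nine correct answers at 82% precision imply 11 attempted identifications, while recall over all 33 transcripts is much lower:

$$
\text{precision} = \frac{9}{11} \approx 0.82, \qquad \text{recall} = \frac{9}{33} \approx 0.27
$$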

Co-author Simon Lermen framed the findings in a Substack post. LLMs are not discovering hidden secrets, he wrote, but automating what skilled investigators could already do manually. The difference is cost.

The Electronic Frontier Foundation flagged broader implications. Jacob Hoffman-Andrews, a senior staff technologist at the EFF, told CyberScoop the concerns extend beyond individual users.

There are a lot of people who want to maintain [pseudonymity] for a wide variety of reasons and they shouldn't all need to be experts in how to avoid a really dedicated adversary, as effectively an LLM is.

— Hoffman-Andrews

Paleka called the findings a "large scale invasion of privacy" in his CyberScoop interview. Deanonymization capability scales predictably with model improvements, he said. The attack pipeline consists of individually benign steps (summarizing, embedding, ranking, reasoning) that are hard to distinguish from legitimate use.

Deanonymization research spans more than two decades. Latanya Sweeney's work, which introduced k-anonymity in 2002, showed that 87% of Americans could be uniquely identified from a five-digit ZIP code, gender, and date of birth. A separate 2025 study found that current identifier-removal methods still leave enough personal information in surrounding text for recovery.
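k-anonymity formalizes Sweeney's observation. A table is k-anonymous if every combination of quasi-identifiers (attributes such as ZIP code, gender, and date of birth that are not names but can be joined against outside data) is shared by at least k records. The sketch below is a minimal illustration with invented records; the field names and helper functions are hypothetical. It measures k and the share of records that are unique on those fields, since a unique record is exactly what a linking attack can re-identify.

```python
from collections import Counter
from typing import Dict, List, Sequence, Tuple

Record = Dict[str, str]

# Quasi-identifiers from Sweeney's example; the field names are hypothetical.
QUASI_IDENTIFIERS = ("zip", "gender", "dob")

def qi_key(record: Record, qi: Sequence[str]) -> Tuple[str, ...]:
    return tuple(record[field] for field in qi)

def k_anonymity(records: List[Record], qi: Sequence[str] = QUASI_IDENTIFIERS) -> int:
    """Size of the smallest group of records sharing identical quasi-identifiers."""
    groups = Counter(qi_key(r, qi) for r in records)
    return min(groups.values()) if groups else 0

def unique_fraction(records: List[Record], qi: Sequence[str] = QUASI_IDENTIFIERS) -> float:
    """Share of records whose quasi-identifier combination appears exactly once."""
    groups = Counter(qi_key(r, qi) for r in records)
    unique = sum(1 for r in records if groups[qi_key(r, qi)] == 1)
    return unique / len(records) if records else 0.0

# Toy "de-identified" table: names removed, quasi-identifiers kept.
rows = [
    {"zip": "02139", "gender": "F", "dob": "1985-03-02", "diagnosis": "flu"},
    {"zip": "02139", "gender": "F", "dob": "1985-03-02", "diagnosis": "asthma"},
    {"zip": "02139", "gender": "M", "dob": "1990-07-14", "diagnosis": "flu"},
    {"zip": "94110", "gender": "F", "dob": "1972-11-30", "diagnosis": "diabetes"},
]
print(k_anonymity(rows))      # prints 1
print(unique_fraction(rows))  # prints 0.5
```

Half of these toy records are unique on ZIP, gender, and birth date, so removing names alone protects them from nothing. The LLM pipeline applies the same logic to free text, pulling quasi-identifiers such as subfield or dialect out of prose instead of table columns.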

The researchers did not release their code, citing misuse risk. In testing, GPT-5 Pro and Anthropic's Claude both refused deanonymization requests when prompted directly, but the pipeline's individual steps can bypass standard guardrails because each one looks benign on its own.

Users, platforms, and policymakers must recognize that the privacy assumptions underlying much of today's internet no longer hold.

Pseudonymous posting no longer guarantees privacy. Avoid reusing distinctive writing patterns, niche interests, or biographical details across platforms. Do not mention your employer, city, or alma mater in the same pseudonymous account that discusses your professional work. The content itself is now the signal.
